The Intelligence Dividend: Everywhere but the productivity statistics?

Also: Tailwind and Open Source Software in the AI era; AI-native Error Messages

Welcome to The Intelligence Dividend, an experimental newsletter about AI, its technology, and the economies it sits within.

Everywhere but the productivity statistics?

One simple way of measuring the economy is to look at how much money it spits out during a given period. This tells us whether a country is getting richer or poorer in absolute terms, but it isn’t especially interesting. It doesn’t tell us what caused that growth. A more interesting way is to compare what the economy produces with how much time went into producing it. This tells us whether a country is getting more efficient, and is the usual measure of labour productivity: output over hours worked.

The American economy is getting remarkably more productive:

Nonfarm business sector labor productivity increased 4.9 percent in the third quarter of 2025, the U.S. Bureau of Labor Statistics reported today, as output increased 5.4 percent and hours worked increased 0.5 percent. (All quarterly percent changes in this release are seasonally adjusted annualized rates.) From the same quarter a year ago, nonfarm business sector labor productivity increased 1.9 percent in the third quarter of 2025. (See tables A1 and 2.)

If output has increased by 5.4%, and hours worked increased by 0.5%, then something made firms produce 4.9% more per hour worked. Is that something AI?
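The arithmetic is worth spelling out, because growth rates compound rather than subtract. Here is a minimal Python sketch using only the two figures quoted in the release; the function name is my own and purely illustrative.

```python
def productivity_growth(output_growth: float, hours_growth: float) -> float:
    """Growth in output per hour, given growth in output and hours (as fractions)."""
    # Productivity is output divided by hours, so its growth factor is the
    # ratio of the two growth factors rather than their simple difference.
    return (1 + output_growth) / (1 + hours_growth) - 1


# Figures from the BLS release quoted above: output +5.4%, hours +0.5%.
growth = productivity_growth(0.054, 0.005)
print(f"{growth:.1%}")
```

For changes this small, simply subtracting one rate from the other gives almost the same answer, which is why the headline numbers appear to line up exactly.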

Well, it’s difficult to tell. The Bureau of Labor Statistics, who collate and publish these numbers on a quarterly basis, break the headline numbers down into four sectors which make complete sense and aren’t at all arbitrary and weirdly overlapping: non-farm business, business, non-financial corporate, and manufacturing.

If we look at non-financial corporate (which excludes small businesses as well as financial services), productivity in that sector grew 3% (annualised) in Q3 2025, or 2.8% year over year. This compares to 3.3% and 2.3% in manufacturing, and 4.9% and 1.9% in the broader category of non-farm business.

This is peculiar for two reasons. The first is that manufacturing is a famously unproductive bit of the economy. In 2024, the sector’s productivity grew by 0.1%, the previous year it shrank by 0.4% and the year before that it shrank by 2.1%. The last time manufacturing productivity grew by more than a couple of percentage points was 2010.

The second is that non-financial corporate grew much less than the broader category of non-farm business, which includes small businesses. Small businesses are also generally considered to be much less productive than big businesses, so it is surprising that including them would push the overall productivity number so much higher rather than dragging it down.

If AI were responsible for the 4.9% productivity surge, we might expect to see the strongest gains in the non-financial corporate sector (which includes large tech companies, professional services firms, and other big corporates). We definitely wouldn’t expect to see it in manufacturing, given that the current AI paradigm is driven by LLMs and not what you’d expect a factory to need, like robotics.

One explanation is that it’s due to measurement error, and these numbers will be revised down in future releases [1]. Another is that manufacturing is finally getting over the supply chain issues that plagued it during covid. A third is that demand for goods swings around quarter by quarter, driven by a bunch of non-AI things, and these numbers are the inevitable effect of this noise.

But it also could be early signs of AI creeping off Twitter and into the rest of the economy. It might be expensive or difficult or otherwise complicated for a larger firm to start using AI, but a guy running a bookshop can just open ChatGPT on his laptop and start asking questions. Manufacturing might suffer various informational problems which LLMs can fix.

It’s all very murky, but it’s worth thinking about the fact that output increased a lot while hours worked stayed roughly flat. Businesses (of the non-farm variety) are getting more bang for their buck this quarter than ever before, and this would be consistent with a labour market in which firms are much more cautious about hiring than they are about investing in new technology. If AI were meaningfully boosting output per hour faster than pay, you would expect to see this sort of pattern: firms hire less, and productivity increases more than wages.

The boring, epistemically responsible thing to do is to wait for another year or two and see if these trends persist - that would help cancel out much of the noise. The more fun thing to do is extrapolate wildly and start making bets.

Open Source Software in the AI era

Back in the 2000s and early 2010s, a common pattern for tech startups was to:

1. Use a lot of open source software to build a product;

2. Write a lot of their own internal software;

3. Get rich;

4. And along the way start feeling guilty and release some of their own software open-source.

Something like this happened with React and GraphQL at Facebook, Lottie at Airbnb, Kafka at LinkedIn, Kubernetes and TensorFlow at Google, and many many many many many other examples.

More recently, it has run in the other direction: something begins as an open-source library and then a company spontaneously forms around it. This is usually because managing an open-source project is supposed to be a labour of love, but love doesn’t pay very well, and living in the sorts of places where open-source code is often written is expensive, and there are very few people outside of Berlin who can be abused by men with neckbeards on GitHub and derive any pleasure from it.

One such project is Tailwind CSS.

Tailwind began in 2017 in the Laravel community (another open-source project around which a company was built), offering a new way to style webpages. Web pages are normally styled by pointing the styles at the content; Tailwind flipped this on its head, allowing the content to point to the styles by defining a bunch of attributes that map to common CSS rules.

This was a popular enough idea that the authors went full time on the project in 2018, and set up Tailwind Labs, a for-profit company that makes its revenue from selling UI components, templates, a book, and corporate sponsorship.

Four days ago, Tailwind CSS laid off 75% of its engineering team. The founder, Adam Wathan, announced it in a comment on a pull request which added an llms.txt endpoint to the documentation. He then went on to record an admirably candid podcast episode about the challenges his business is facing: traffic to the documentation is down 40% from early 2023 and revenue is down 80%. And this is all while the framework itself has become wildly popular:

[Chart: weekly downloads of Tailwind CSS]

Tailwind’s current business is structurally similar to a media company: create valuable free content, and monetise via adjacencies. But AI is decoupling the way people write software from the traditional channels through which they’d learn about that software, by simply removing the need to learn it. This makes those adjacencies much less adjacent. The framework itself benefits enormously from this, of course: LLMs really like Tailwind, which drives adoption; that code ends up in training corpuses of future models, which makes them like it even more. But the business crashes, because if nobody is visiting your documentation, then nobody is going to discover your templates and books.

The core problem, I think, is that freemium only works when there’s a cost to staying on the free tier (usually time spent figuring it out). AI is radically reducing some of those costs, and thus making it much harder for companies like Tailwind to extract value from the communities they have created.

There are lots of options here. They could lean into sponsorship much more heavily. They could charge for a more aggressive premium version of the framework, and use their npm package to distribute it. They could do a deal with the model companies. They could launch a creator marketplace. They could launch a token. But all of these have obvious problems, and there is little certainty that any of them would provide long-term revenues. Their best bet may be to skip that chapter entirely.

AI-native Error Messages

There are, roughly speaking, two ways in which code can go wrong. It can either fail at compile-time, which happens when the formal rules of the programming language are violated in some way, or it can fail at runtime, when the rules of the program itself are violated in some way.

LLMs are very good at solving compile-time errors, largely because these types of errors are often much simpler: there are a fairly small number of degrees of freedom in a language’s syntax, and whether a piece of code is valid can be verified reliably.

Runtime errors are more complex, because the space of possible questions one needs to ask expands from ‘does this sequence of letters and symbols match the a priori known rules’ to ‘does the functionality described by this sequence of letters and symbols cohere with the state of the program’, and the state of the program is often something that is not known a priori.
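To make the distinction concrete, here is a small Python sketch. Python isn’t compiled in the usual sense, so its built-in `compile` step stands in for a compiler here. The helper names and the `user` snippet are my own illustrations.

```python
from typing import Optional


def check_syntax(source: str) -> Optional[str]:
    """Return a syntax error message, or None if the source parses."""
    try:
        compile(source, "<snippet>", "exec")
    except SyntaxError as err:
        return err.msg
    return None


def run(source: str, state: dict) -> Optional[str]:
    """Execute source against some program state; return an error message or None."""
    try:
        exec(source, {}, dict(state))
    except Exception as err:
        return f"{type(err).__name__}: {err}"
    return None


broken_syntax = "user_id = user['id'"   # missing closing bracket
valid_syntax = "user_id = user['id']"   # well-formed

# The syntax check needs nothing but the text itself.
syntax_problem = check_syntax(broken_syntax)
syntax_ok = check_syntax(valid_syntax)

# Whether the valid code works depends on state only known at runtime.
runtime_ok = run(valid_syntax, {"user": {"id": 7}})
runtime_problem = run(valid_syntax, {"user": None})
print(syntax_problem, syntax_ok, runtime_ok, runtime_problem, sep="\n")
```

The same well-formed line passes or fails depending entirely on what `user` happens to be when it runs, which is exactly the information a model reading the source alone doesn’t have.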

Normally, when something goes wrong, we rely on error messages which describe a rough outline of the error. But these messages have so far been designed for humans, who have an existing mental model of the system and the possible states it could be in. Here’s a typical error:

TypeError: Cannot read property 'id' of undefined
    at processUser (/app/services/user.js:47:23)

A human with context can look at this and think: okay, processUser tried to read id from something that turned out to be undefined, that thing came from somewhere upstream that I already know about, and I have a rough idea of the conditions under which it can get its value.

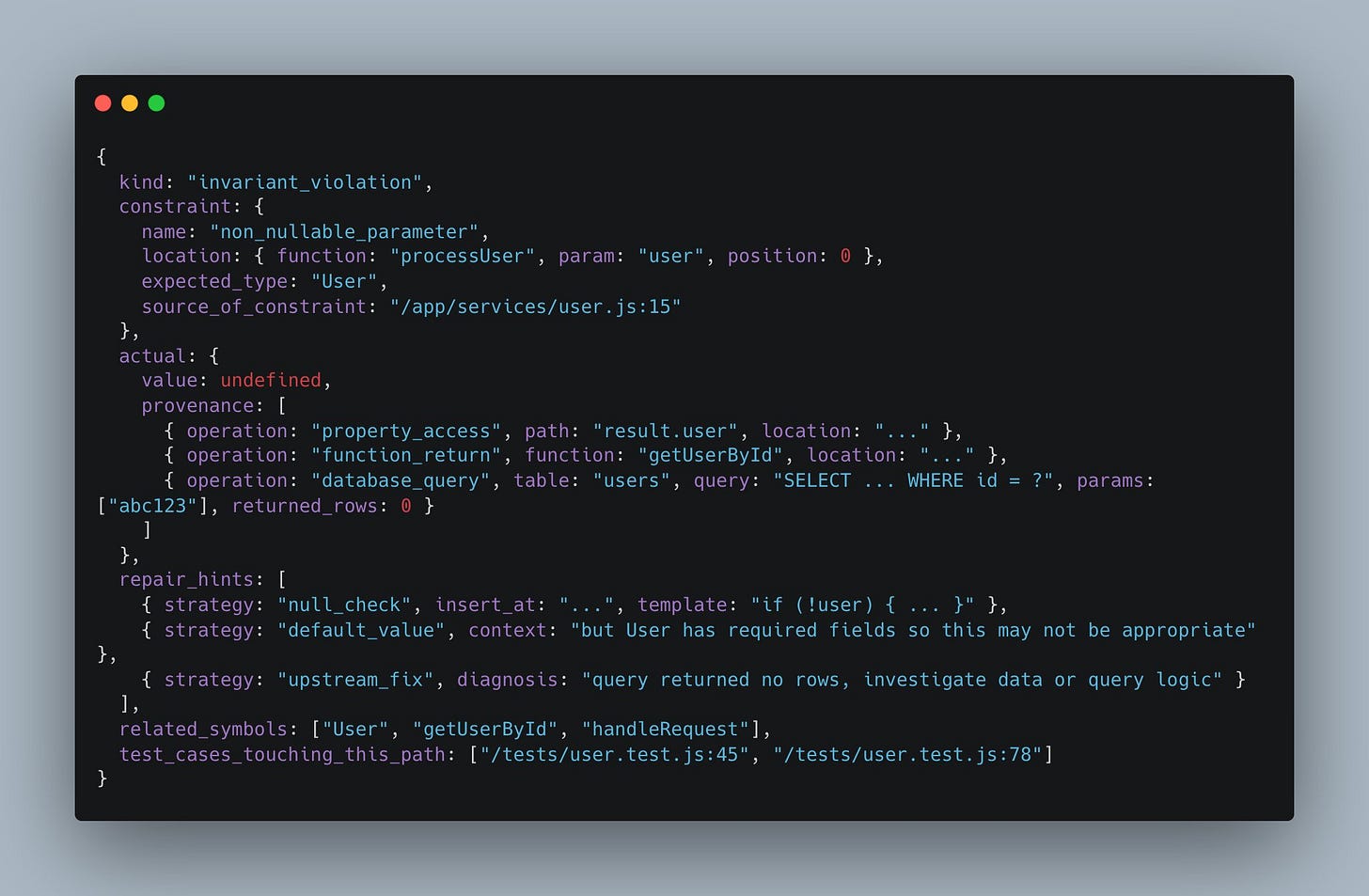

LLMs can do all this, up to a point, but they usually don’t have the same context that a human familiar with the codebase does. So it occurred to me that the next generation of frameworks (and indeed languages) might better optimise for LLMs writing their code by providing much more explicit error messages:

An error message like the above shows the problem, an explicit description of what the program expected, what it received instead, the transformations the data underwent to get there, pointers to nearby code, and a list of possible ways in which the problem might be fixed. All of this information is in principle available to the program at the point it errored, and could be supplemented with natural language hints that a faster, smaller LLM could generate.
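As a rough sketch of how a framework might assemble such a message, here is one possible shape in Python. Everything here is hypothetical: `ErrorReport`, its field names, `process_user` and the upstream steps in the trail are invented for illustration and don’t come from any existing library.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class ErrorReport:
    """Hypothetical shape for an error message aimed at a model, not a human."""

    problem: str
    expected: str
    received: str
    data_trail: List[str] = field(default_factory=list)      # steps the data went through
    nearby_code: List[str] = field(default_factory=list)     # pointers to relevant code
    possible_fixes: List[str] = field(default_factory=list)  # candidate remedies

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


def process_user(user: Optional[dict], data_trail: List[str]) -> int:
    """Return the user's id, or raise with a detailed report if there is no user."""
    if user is None:
        report = ErrorReport(
            problem="Tried to read 'id' from a missing user",
            expected="a user record with an 'id' field",
            received="None",
            data_trail=data_trail,
            # Location borrowed from the error quoted earlier, as an example only.
            nearby_code=["/app/services/user.js:47"],
            possible_fixes=[
                "Check for a missing user before calling process_user",
                "Make the upstream lookup raise when no user is found",
            ],
        )
        raise ValueError(report.to_json())
    return user["id"]


# The trail entries are invented stand-ins for whatever the runtime recorded.
try:
    process_user(None, data_trail=["load_session()", "lookup_user(session)"])
except ValueError as err:
    print(err)
```

Emitting the report as JSON is just one choice. The point is that each field is something the program already knows at the moment it fails.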

The trade-off, of course, is clear: it’s much more expensive to generate a message like this. But if the primary consumer of code is a model, maybe this doesn’t matter? The benefit is that the model will be given a huge amount of detail, and can get to the right fix more quickly. And, on the rare occasion that human engineers look at these errors, that detail will help them too.

The larger point here is that existing programming languages are not in any way designed for LLMs. But we might see a kind of reverse RLHF start to happen, where the languages themselves begin to adapt around their new userbase, and this is a space which has been so far essentially unexplored.

Things happen

The context is the work. Will advanced AI lead to negative economic growth? Anthropic is raising even more money. Gemini is slowly taking over Google. How to game the METR plot, and the illusion of diminishing returns. Promising new AI media, outwith this blog. The UK government successfully does some AI stuff. Will AI prove Piketty right? Brian suggests no. So does Séb. App store subscription apps have grown 15% in the past quarter. Out of sight, out of mind?

[1] This often happens – the release I quoted above revises 2024 non-financial corporate productivity growth by 1.7pp.